The excitement around quantum computing is undoubtedly contagious. Developments in the quantum computing hardware industry, on the cybersecurity front, and in other areas have made the world of quantum computing a fascinating universe of possibilities.

Of course, the looming question of whether quantum “speedups” — which refer to a computational advantage over regular (classical) computers — exists remains to be definitely proven for all cases. Nevertheless, developments over the past few decades have still shown promising results (take, for instance, Shor’s quantum factorization algorithm, with an estimated exponential advantage).

There continue to exist, however, NP-complete optimization problems that are in need of significantly more efficient algorithms. For some of these cases, finding an effective, better quantum algorithm capable of returning a precise solution is, to put it simply, difficult.

Below, we explore several major concepts underlying a major quantum computing-based optimization algorithm.

Why Bother With Optimization Algorithms?

As the name suggests, quantum optimization algorithms quite literally are “algorithms that utilize the processes of quantum computers to solve optimization problems.” I have unabashedly given you absolutely no information in this definition, but names in this area tend to be a little lacking in the creative aspect (no offense intended).

It is, however, worth noting that a large part of the potential for quantum speedups comes from the ability to simulate large datasets and approximate the optimal outcome. In order to fully understand the problem, however, we begin with the field of optimization.

Why is Optimization So Difficult?

In general, however, optimization problems have historically been difficult to approach as the solutions tend to require a rather large running time, or time complexity, especially in the worst case scenarios.

In case you are unfamiliar with the concept, “time complexity” is a method of describing precisely how long an algorithm will take as a function of its input(s). In other words, we are considering how complex the solution to a problem is and are simply asking the algorithm how many loops it will take to process the data relative to the input.

For example, an algorithm that takes the exact same amount of time to process information regardless of the input (such as a print statement in Java or Python) will have O(1) running time, or constant time complexity, since it can be upper-bounded by a constant.

On the other hand, consider an algorithm that attempts to find some item in a list of unsorted objects. An algorithm that iterates (goes through) every item in this list will have a complexity of O(n), where n is the size of the list, since the running time can at most be upper-bounded by some constant times the number of items in the list. This “big-O” notation merely notes that this is an upper bound.

We now return to our discussion of optimization problems. While any brute-force algorithm that attempts to test all possible solutions to find the best (optimal) solution may work rather well on a fast computer and small input size, most industries are looking to have computers solve problems of sizes few humans are able to easily comprehend.

Consequently, brute-force algorithms may take longer than several human lifespans to finish calculating certain problems, even when run on the world’s fastest supercomputers.

The Terrifying Problems of Humanity: An Example Optimization Problem

The area of optimization problems is, in itself, a wide-ranging set of scenarios that can be applied to an infinite number of situations. Of course, algorithms must be tailored to fit these scenarios, but, depending on the problem and type of data at hand, the algorithms will generally vary.

One popular example of an optimization problem is the traveling salesman problem (alternatively called the traveling politician problem) and is named for the daunting task of planning out the shortest path the salesman (or politician) can take to visit all the cities on his or her list.

As any good secretary might know, merely throwing the names of the cities into a hat and pulling them out one by one to determine the order will not suffice. This method will certainly ensure the salesman visits every city, but the cost of travel — the distance between one point and the next — is not optimal.

After all, it is all too possible that the salesman is scheduled to travel to Juneau, Alaska Monday, then Tampa, Florida then Tuesday, and then back up to, perhaps, Seattle, Washington on Wednesday. In the span of merely three days, the poor salesman would have travelled diagonally across America twice.

The traveling salesman problem can be reduced into a bit of a simpler problem where, given a set of points on a graph, one attempts to find the shortest path that traverses all the points (vertices) in the set.

This concept of simplifying a problem down to its most basic components — in this case, a two-dimensional graph with several vertices and edges — allows for the optimization problems to be approached not only from a more abstract perspective but also easily applied to a variety of cases.

The Maximum Cut Problem

The consideration of graphs and whatnot quite handily brings about the question of whatever else can we do with sets of points and lines. The field of graph theory, quite a beauty in itself, wonderfully demonstrates this question. Among the graph theory-based optimization problems lies one based on coloring: the max-cut problem.

Graph coloring is a dilemma in itself, even with a kindergarten diploma in crayons — the concept is considerably more complex in establishing behavior. Each vertex (that is, a point) in a graph (a set of vertices and edges, the lines between the points) can be assigned a color of some sort. Mathematicians, over the years, have attempted to apply various constraints and find patterns among the graphs.

One rather curious question that has arisen is as follows:

Consider some graph of V vertices and E edges, where V and E are integers. We will temporarily assume there are no loops, where a single edge loops back to connect to the same vertex it started at, or parallel edges, where more than one edge exists between the same pair of vertices.

We attempt to color this graph with 2 distinct colors, c₁ and c₂ such that each vertex is assigned a color. This allows for us to divide the entire set of vertices into two disjoint sets, such that no vertex with color c₁ is also assigned color c₂ and vise versa (this statement may seem obvious, but we have just created a bipartite graph).

The problem is as follows: How can we color this graph such that the maximum number of edges between these two disjoint sets is achieved?

This problem, known as the maximum cut problem, may appear to be quite straightforward, but how could one find the optimal solution without testing every possible solution? A light skim of the situation will reveal that the max-cut problem is, in fact, a NP-hard problem, and, thus, effective and efficient algorithms are still in the works.

Enter The Quantum Approximate Optimization Algorithm

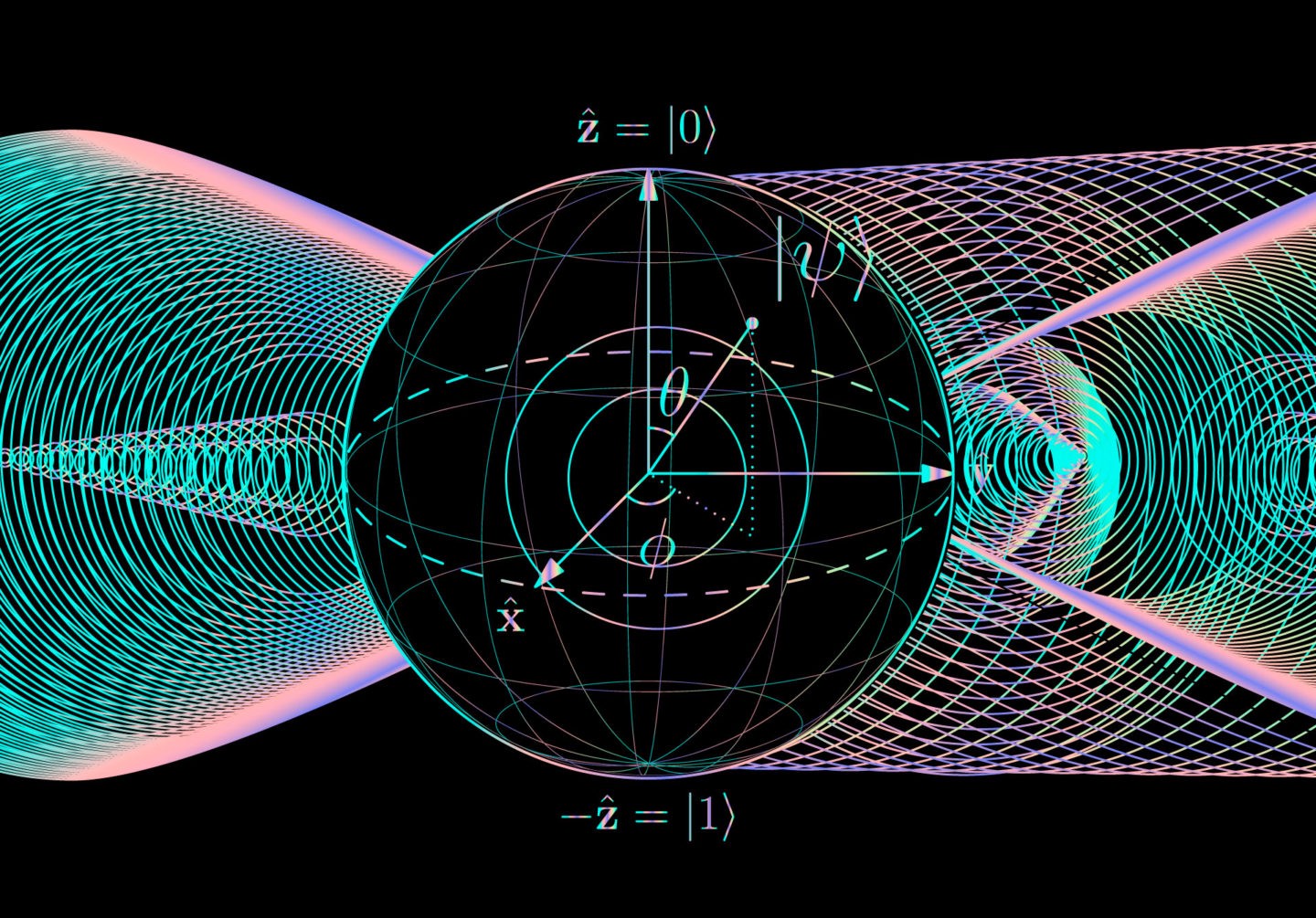

Better known as QAOA (not to be confused with quinoa), the Quantum Approximate Optimization algorithm was first proposed by Edward Farhi, Jeffrey Goldstone, and Sam Gutmann in 2014. The algorithm itself was aimed at solving combinatorial optimization problems, more specifically the max-cut problem introduced above, using a mixture of quantum bit representations and classical algorithms.

How does the QAOA function? At a high level, random strings, representing the probable solutions, are sampled based on the distribution representing the inputs. The average of these results are taken based on the number of samples, and an expected value is calculated, allowing for additional calculations (using a classical algorithm) to be run to determine the optimal solution.

Its introduction first caught the attention of numerous researchers and industry, as it was a prominent step among the new developments in technology — and quantum computing — that occurred that year. The algorithm held the title of best known approximation ratio (ratio between the solution achieved and the actual, optimal solution) at the time of the paper’s release. While its existence as “best” was brief, as a team was able to develop a classical algorithm deemed more effective roughly a year later, the QAOA remains on the podium as a significant optimization algorithm. Regardless, the hope is that the QAOA can be applied to problems relating to, for example, circuit design, statistical analysis (e.g. physics), data science, and more.

Of course, this algorithm is not the only quantum computing-based approach to optimization problems. In fact, quantum computing calculations have also functioned as the inspiration for classical algorithms.

Adiabatic Quantum Computing and Quantum-Inspired Algorithms

Many quantum-inspired algorithms are modelled after adiabatic quantum computing. The term “adiabatic” is often used to refer to pressure systems. In this case, adiabatic quantum systems begin by being set in their lowest energy state.

Over time, little by little, this quantum system is altered to represent a more complex system. However, the relatively slow rate theoretically gives the system time to adapt and remain in the lowest possible energy configuration.

This is analogous to squeezing the air out of a balloon. Both rapidly and slowly squeezing it will alter the state, but immediately squashing the balloon will likely pop it, creating a mess of sorts. Slowly changing the balloon’s state, however, will allow for the air to gradually leak out of the balloon, allowing it to reach an unfilled state, albeit with some minor changes.

This bodes well for optimization problems, as the input can be altered such that this “lowest energy configuration” is representative of the optimal solution. Classical algorithms seek to replicate this act of gradually altering the representation of the system, either through a series of sampling or other methods (as seen above), and, generally, are capable of better performance compared to standard approaches to the problem.

Conclusion

As can be observed, the inspiration and technical capabilities enabled by quantum computing is exciting on several different levels. The field is able to function under a unique set of capabilities (and constraints) that allow it to explore approaches to problems that would otherwise not have been conceivable on classical computers alone.

Works Cited

Barak, B., Moitra, A., O’Donnell, R., Raghavendra, P., Regev, O., Steurer, D., Trevisan, L., Vijayaraghavan, A., Witmer, D., & Wright, J. (2015, August 11). Beating the random assignment on constraint satisfaction problems of bounded degree. arXiv.org. Retrieved September 21, 2021, from https://arxiv.org/abs/1505.03424.

Childs, A. (n.d.). Overview of adiabatic quantum computation. Retrieved September 20, 2021, from https://www.cs.umd.edu/~amchilds/talks/cifar13-tutorial.pdf.

Farhi, E., Goldstone, J., & Gutmann, S. (2014, November 14). A quantum approximate optimization algorithm. arXiv.org. Retrieved September 21, 2021, from https://arxiv.org/abs/1411.4028.

Farhi, E., Goldstone, J., & Gutmann, S. (2015, June 25). A quantum approximate optimization algorithm applied to a bounded occurrence constraint problem. arXiv.org. Retrieved September 21, 2021, from https://arxiv.org/abs/1412.6062.

Frtibble. (2021, February 1). What is quantum-inspired optimization? – azure quantum. What is quantum-inspired optimization? – Azure Quantum | Microsoft Docs. Retrieved September 21, 2021, from https://docs.microsoft.com/en-us/azure/quantum/optimization-what-is-quantum-optimization.

Parekh, O. D. (2018, June 1). Quantum optimization algorithms. Quantum Optimization Algorithms. (Conference) | OSTI.GOV. Retrieved September 21, 2021, from https://www.osti.gov/servlets/purl/1526360.

Team, T. Q. (2021, August 25). Solving combinatorial optimization problems USING QAOA. Qiskit. Retrieved September 21, 2021, from https://qiskit.org/textbook/ch-applications/qaoa.html.

Wang, R.-S., & Wang, L.-M. (2009, December 24). Maximum cut in fuzzy nature: Models and algorithms. Journal of Computational and Applied Mathematics. Retrieved September 21, 2021, from https://www.sciencedirect.com/science/article/pii/S0377042709008309#:~:text=Besides%20its%20theoretical%20importance%2C%20the,and%20data%20clustering%20%5B3%5D.&text=For%20example%2C%20for%20network%20design,the%20cost%20of%20some%20infrastructure.